A Unix timestamp is a simple numeric value that tracks the total number of seconds passed since January 1, 1970, at 00:00:00 UTC. Software systems use this universal, time-zone-independent standard to store dates efficiently and calculate time across different global platforms without any timezone confusion.

Understanding the Unix Epoch and UTC

The Unix Epoch—January 1, 1970, at 00:00:00 UTC—is the absolute starting line for Unix time. Every timestamp is just a running count of the seconds that have ticked by since that baseline moment. Servers, databases, and operating systems rely on this shared reference point to keep digital events synced around the world.

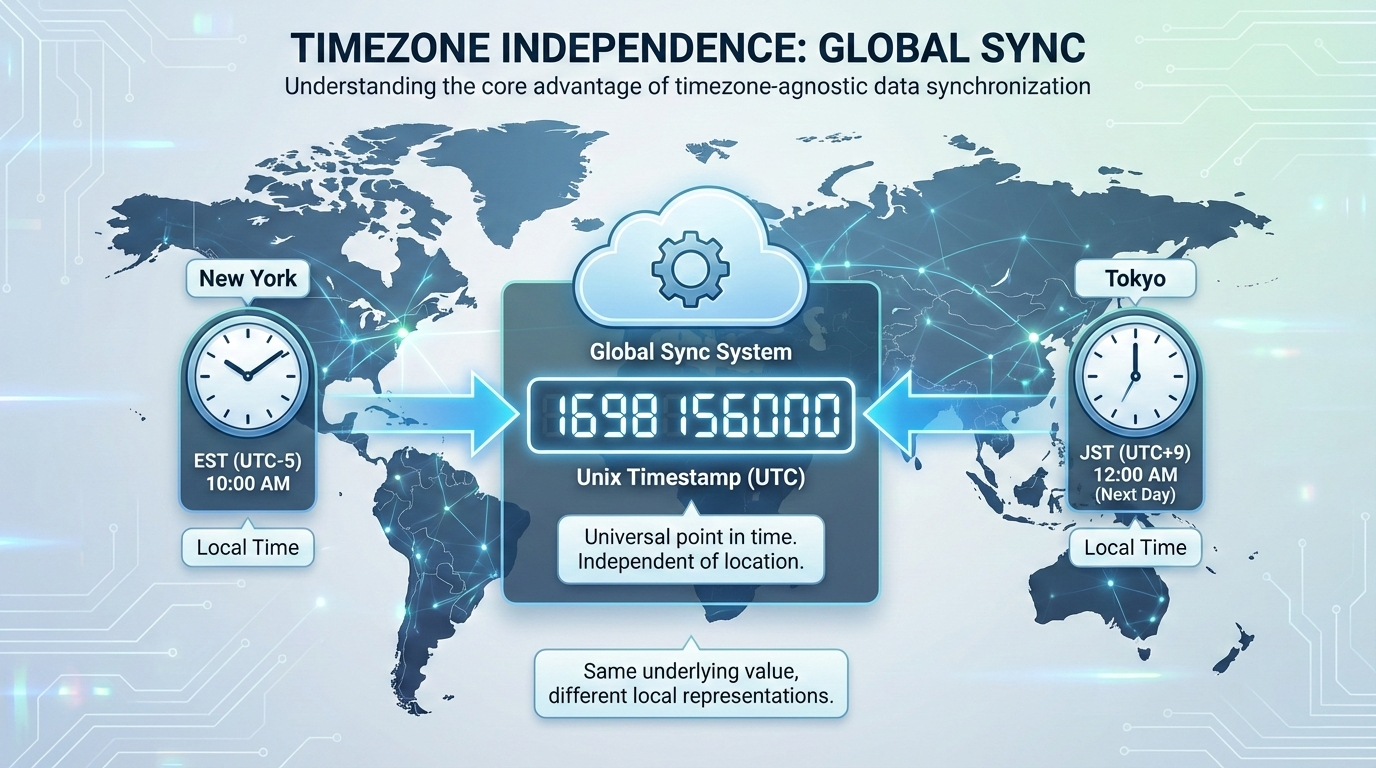

Because it relies on UTC (Coordinated Universal Time), Unix time ignores local timezones entirely. If a server in New York and a server in Tokyo generate a timestamp at the exact same moment, they output the exact same integer. This skips the headache of dealing with daylight saving time adjustments or regional offsets.
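
As a minimal sketch of that idea, the Python snippet below builds the same instant as two different wall-clock times and shows that both collapse to one integer. The fixed offsets (UTC-5 for New York in winter, UTC+9 for Tokyo) and the sample date are assumptions chosen for illustration, not values from a real server.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets used only for illustration (assumed: New York at UTC-5 in
# winter, Tokyo at UTC+9). Real code would use a timezone database instead.
NEW_YORK = timezone(timedelta(hours=-5))
TOKYO = timezone(timedelta(hours=9))

# The same instant, written as two different local wall-clock times.
in_new_york = datetime(2026, 1, 15, 10, 0, 0, tzinfo=NEW_YORK)
in_tokyo = datetime(2026, 1, 16, 0, 0, 0, tzinfo=TOKYO)

ts_new_york = int(in_new_york.timestamp())
ts_tokyo = int(in_tokyo.timestamp())

# Both values are identical because the timestamp is counted from the UTC epoch.
print(ts_new_york, ts_tokyo, ts_new_york == ts_tokyo)
```

The local date even differs by a day between the two cities, yet the stored number is the same.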

Computers also process raw integers much faster than text strings like “October 24, 2026, 10:00 AM EDT.” Storing time as a single number speeds up database queries and keeps JSON API payloads light. When it’s time to show the date to a user, the frontend converts the timestamp into a date and applies the browser’s local timezone rules.

You will usually see timestamps in one of two formats: 10 digits or 13 digits.

A 10-digit Unix timestamp counts standard seconds. This is the default format for most backend systems, relational databases, and Unix-like operating systems like Linux and macOS.

A 13-digit Unix timestamp counts milliseconds instead of seconds. JavaScript and many modern frontend environments use this format for higher precision event tracking by adding three extra digits. Converting between the two is just basic math: multiply a 10-digit timestamp by 1,000 to get milliseconds, or divide a 13-digit timestamp by 1,000 to drop back to seconds.
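
The conversion can be sketched in a few lines of Python. The function names are hypothetical, and the 12-digit cutoff in `normalize_to_seconds` is a heuristic assumption for present-day dates rather than a rule from any standard.

```python
def seconds_to_millis(ts_seconds: int) -> int:
    """Convert a 10-digit (seconds) timestamp to milliseconds."""
    return ts_seconds * 1000


def millis_to_seconds(ts_millis: int) -> int:
    """Convert a 13-digit (milliseconds) timestamp to whole seconds.

    Floor division drops the millisecond part instead of rounding.
    """
    return ts_millis // 1000


def normalize_to_seconds(ts: int) -> int:
    """Heuristic: treat values with 12 or more digits as milliseconds.

    This is an assumption that holds for dates near the present; it would
    misread second-based timestamps thousands of years in the future.
    """
    return ts // 1000 if abs(ts) >= 100_000_000_000 else ts


print(seconds_to_millis(1_700_000_000))         # 1700000000000
print(millis_to_seconds(1_700_000_000_123))     # 1700000000
print(normalize_to_seconds(1_700_000_000_123))  # 1700000000
```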

Cheat Sheet for Non-Developers

You don’t always need a converter to figure out roughly what year a timestamp represents. An average calendar year packs in about 31,556,926 seconds.

Adding about 31.5 million to a 10-digit timestamp moves it forward by roughly one year. For quick reference, a 10-digit timestamp starting with 16 falls between September 2020 and November 2023. If it starts with 17, you’re looking at a date between November 2023 and January 2027. Recognizing these leading digits helps data analysts eyeball database rows and spot weird date ranges without writing custom conversion scripts.
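
If you do want to check the mental math, here is a rough Python sketch. `approx_year` is a hypothetical helper that uses the average-year figure quoted above, so it can be off by one near a year boundary; `exact_utc` gives the precise date for comparison.

```python
from datetime import datetime, timezone

SECONDS_PER_YEAR = 31_556_926  # average-year figure quoted in the text


def approx_year(ts_seconds: int) -> int:
    """Estimate the calendar year by counting average years since 1970."""
    return 1970 + ts_seconds // SECONDS_PER_YEAR


def exact_utc(ts_seconds: int) -> datetime:
    """Exact UTC date and time for a non-negative seconds timestamp."""
    return datetime.fromtimestamp(ts_seconds, tz=timezone.utc)


# Round-number boundaries where the two leading digits change.
for boundary in (1_600_000_000, 1_700_000_000, 1_800_000_000):
    print(boundary, approx_year(boundary), exact_utc(boundary).date())
```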

How to Use Unix Timestamps in Programming Languages

Grabbing the current system time and formatting it correctly is a daily task in development. Here is how different programming languages handle it with their native time functions.

In JavaScript, running Date.now() gives you the current 13-digit millisecond timestamp. If you need the 10-digit version, use Math.floor(Date.now() / 1000). To turn a millisecond timestamp into a readable ISO 8601 string, pass it to the Date constructor: new Date(ms).toISOString(). Calling new Date() with no argument formats the current time instead.

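Python relies on its time module. Running import time; time.time() returns the current time as a float of seconds; wrap it in int() if you need the 10-digit integer. To convert a timestamp into an ISO 8601 string, use datetime.datetime.fromtimestamp(ts, tz=datetime.timezone.utc).isoformat(). The older datetime.datetime.utcfromtimestamp() returns a datetime with no timezone attached and is deprecated as of Python 3.12.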
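
Put together, a small Python sketch might look like the following. `to_iso8601` is a hypothetical helper name; the calls inside it are from the standard library.

```python
import time
from datetime import datetime, timezone


def to_iso8601(ts_seconds: float) -> str:
    """Format a seconds timestamp as a timezone-aware ISO 8601 string in UTC."""
    return datetime.fromtimestamp(ts_seconds, tz=timezone.utc).isoformat()


now_seconds = int(time.time())           # 10-digit seconds timestamp
now_millis = time.time_ns() // 1_000_000  # 13-digit milliseconds timestamp

print(now_seconds, now_millis)
print(to_iso8601(now_seconds))
print(to_iso8601(0))  # 1970-01-01T00:00:00+00:00
```

Passing an explicit UTC timezone keeps the offset in the output string, so a consumer does not have to guess which zone the date refers to.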

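PHP keeps things straightforward with the time() function for a 10-digit timestamp. If you need milliseconds, note that microtime(true) returns seconds as a float with microsecond precision, so multiply and round: (int) round(microtime(true) * 1000). To format a PHP timestamp into an ISO 8601 string, use date('c', time()), which applies the server’s configured timezone, or gmdate('c', time()) for UTC.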

Relational databases have their own extraction functions. MySQL uses UNIX_TIMESTAMP(), while PostgreSQL relies on EXTRACT(EPOCH FROM NOW()), which returns fractional seconds rather than an integer. When sending these database records to an API, formatting the values as ISO 8601 strings in UTC gives mobile apps and third-party integrations an unambiguous date to parse.

What is the Year 2038 Problem (Y2038)?

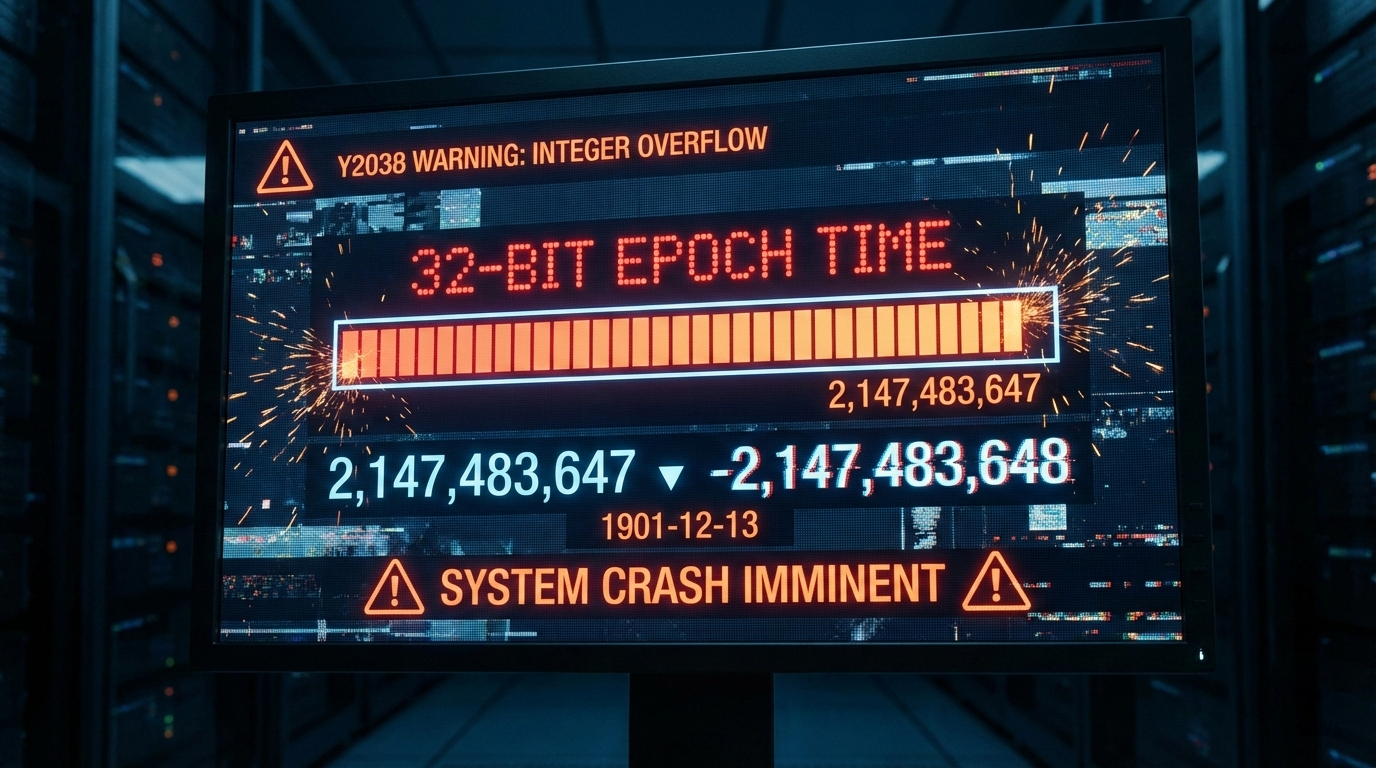

The Year 2038 Problem (Y2038) is a ticking clock for older systems that store time as a 32-bit signed integer. Because of strict binary limits, a 32-bit integer maxes out at exactly 2,147,483,647.

When the global timestamp hits that number, systems won’t smoothly roll over to the next second. Instead, the integer overflows and flips to a massive negative number (-2,147,483,648). Computers reading that negative value will suddenly think the date is December 13, 1901.

The last second a signed 32-bit timestamp can represent is January 19, 2038, at 03:14:07 UTC, so the overflow happens one second later, at 03:14:08 UTC. If they aren’t updated, legacy apps, older servers, and embedded IoT hardware using 32-bit integers can suffer logic failures, corrupted data, and crashes.
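
The wraparound can be simulated in Python, whose own integers never overflow, by forcing a value through a 32-bit C integer. This is only an illustration of the arithmetic, not of how any particular system fails; `as_utc` is a hypothetical helper.

```python
import ctypes
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # 2,147,483,647


def as_utc(ts_seconds: int) -> datetime:
    """Convert a timestamp to UTC using plain arithmetic from the epoch.

    Adding a timedelta works for negative values on every platform.
    """
    return EPOCH + timedelta(seconds=ts_seconds)


# ctypes.c_int32 performs no overflow checking, so one second past the
# maximum wraps around to the most negative 32-bit value.
wrapped = ctypes.c_int32(INT32_MAX + 1).value

print(as_utc(INT32_MAX).isoformat())  # 2038-01-19T03:14:07+00:00
print(wrapped)                        # -2147483648
print(as_utc(wrapped).isoformat())    # 1901-12-13T20:45:52+00:00
```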

Future-Proofing Your Database against Y2038

The only real fix for Y2038 is storing timestamps in 64-bit integers instead of 32-bit ones. A 64-bit signed integer pushes the next overflow event out by about 292 billion years, safely making it a problem for another era.
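
The 292 billion figure is easy to verify with back-of-the-envelope arithmetic. The sketch below assumes the same average-year length of 31,556,926 seconds used earlier in the article.

```python
SECONDS_PER_YEAR = 31_556_926  # average-year figure used in this article

INT32_MAX = 2**31 - 1
INT64_MAX = 2**63 - 1

# Years of range available after 1970 before each signed integer overflows.
years_32 = INT32_MAX / SECONDS_PER_YEAR
years_64 = INT64_MAX / SECONDS_PER_YEAR

print(f"32-bit: about {years_32:.0f} years")
print(f"64-bit: about {years_64 / 1e9:.0f} billion years")
```

Roughly 68 years from 1970 lands in 2038, which matches the overflow date above.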

Database admins need to audit their tables and find any columns storing time as INT or INTEGER, which are 32-bit types in most SQL databases. Changing those columns to BIGINT gives them 64-bit capacity. You will also need to check your application’s source code to make sure backend variables, serializers, and file formats hold the full 64-bit value without truncating it.
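
On the application side, one way to catch truncation early is to check values against the 32-bit range before writing them. The sketch below is an assumption about how such a guard could look; `fits_int32` and `pack_int64` are hypothetical helpers, and `struct` stands in for any fixed-width binary format.

```python
import struct

INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def fits_int32(ts_seconds: int) -> bool:
    """Return True if the timestamp survives storage in a signed 32-bit field."""
    return INT32_MIN <= ts_seconds <= INT32_MAX


def pack_int64(ts_seconds: int) -> bytes:
    """Serialize a timestamp as a little-endian signed 64-bit integer."""
    return struct.pack("<q", ts_seconds)


after_2038 = 2_147_483_648  # one second past the 32-bit limit

print(fits_int32(after_2038))  # False

try:
    struct.pack("<i", after_2038)  # "<i" is a signed 32-bit field
except struct.error as exc:
    print("32-bit pack failed:", exc)

print(len(pack_int64(after_2038)))  # 8 bytes
```

Python refuses to pack the oversized value; languages with fixed-width integers may wrap or truncate silently instead, which is why the audit matters.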

How Do Systems Handle Leap Seconds?

Standard Unix time pretends every day has exactly 86,400 seconds. It ignores leap seconds to keep the math predictable. When a leap second is inserted into UTC, Unix time has no distinct value for it, so one timestamp ends up covering two consecutive real seconds. In practice, the clock appears to repeat the last second of the day.
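
That fixed day length is what makes timestamp arithmetic so simple. As a small Python sketch (with `split_timestamp` as a hypothetical helper), integer division alone recovers the day count and time of day:

```python
SECONDS_PER_DAY = 86_400  # Unix time assumes every day is exactly this long


def split_timestamp(ts_seconds: int) -> tuple[int, int, int, int]:
    """Split a timestamp into (days since epoch, hour, minute, second) in UTC."""
    days, second_of_day = divmod(ts_seconds, SECONDS_PER_DAY)
    hours, remainder = divmod(second_of_day, 3600)
    minutes, seconds = divmod(remainder, 60)
    return days, hours, minutes, seconds


print(split_timestamp(1_700_000_000))  # (19675, 22, 13, 20)
```

No calendar lookup or leap-second table is involved, which is exactly the trade-off described above.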

Repeating a second is dangerous for distributed systems that rely on strict chronological order. It can crash financial databases or scramble transaction tokens. To get around this, major tech companies use a workaround called “Leap Smearing.” Rather than repeating a specific second, servers using leap smearing slightly stretch out the length of every second across a 24-hour period. The extra time gets absorbed seamlessly, and the system clock keeps moving forward without a hiccup.
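
To make the idea concrete, here is a minimal sketch of a linear smear in Python. It assumes a window of 86,401 real seconds (one normal day plus the leap second) mapped onto 86,400 clock seconds; the function name and the exact window are illustrative assumptions, not the implementation used by any specific company.

```python
REAL_WINDOW = 86_401   # real seconds elapsed, including the leap second
SMEAR_WINDOW = 86_400  # seconds the smeared clock reports over the same span


def smeared_elapsed(real_elapsed: float) -> float:
    """Map real seconds since the window start to smeared clock seconds."""
    if not 0 <= real_elapsed <= REAL_WINDOW:
        raise ValueError("real_elapsed must fall inside the smear window")
    return real_elapsed * SMEAR_WINDOW / REAL_WINDOW


print(smeared_elapsed(0))                      # 0.0
print(smeared_elapsed(REAL_WINDOW))            # 86400.0
print(smeared_elapsed(43_200.5))               # roughly halfway through
print(REAL_WINDOW / SMEAR_WINDOW)              # length of one smeared second
```

Each smeared second lasts about 1.0000116 real seconds, and the clock value only ever increases, so no timestamp is repeated.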

FAQ

What is the difference between Unix time and Epoch time?

There really isn’t a practical difference; developers use the terms interchangeably. Strictly speaking, the epoch is the starting baseline (January 1, 1970, at 00:00:00 UTC), while Unix time is the count of seconds since that moment.

Why do some Unix timestamps have 10 digits while others have 13 digits?

A 10-digit timestamp counts standard seconds, which is the default for most backend servers and databases. A 13-digit timestamp counts exact milliseconds. JavaScript and modern frontend frameworks use the 13-digit format to track high-precision events.

How does Unix time handle leap seconds?

It essentially ignores them. Unix time assumes every day has exactly 86,400 seconds. When a leap second happens, the standard timestamp just repeats the final second. To avoid issues with repeated seconds, larger enterprise systems often use “leap smearing” to gradually stretch the extra time out across a full day.

Why did the Unix epoch start specifically on January 1, 1970?

It was mostly an arbitrary choice. Back in the early 1970s, Unix engineers needed a recent, convenient date to start counting system time. January 1, 1970, offered a clean baseline that fit neatly into the tight memory limits of early computers.

Can a Unix timestamp be a negative number?

Yes, negative timestamps simply represent historical dates before the Epoch (prior to January 1, 1970, at 00:00:00 UTC). The system counts backward, letting software track past dates using the exact same logic.
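
A short Python sketch shows the backward count. `from_unix` and `to_unix` are hypothetical helpers that use plain arithmetic from the epoch, which handles negative values on every platform.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def from_unix(ts_seconds: int) -> datetime:
    """Convert a timestamp (positive or negative) to a UTC datetime."""
    return EPOCH + timedelta(seconds=ts_seconds)


def to_unix(moment: datetime) -> int:
    """Convert a timezone-aware datetime back to whole seconds since the epoch."""
    return int((moment - EPOCH).total_seconds())


print(from_unix(-1).isoformat())       # 1969-12-31T23:59:59+00:00
print(from_unix(-86_400).isoformat())  # 1969-12-31T00:00:00+00:00
print(to_unix(datetime(1969, 12, 31, tzinfo=timezone.utc)))  # -86400
```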

Conclusion

A Unix timestamp acts as the backbone of timezone-independent timekeeping, steadily counting seconds since 1970. Turning complex dates into a single number makes database storage cleaner, processing faster, and cross-platform communication much more reliable.

If you are still running legacy systems, audit your database and code to ensure they store timestamps in 64-bit integers. Upgrading those limits now is the best way to future-proof your infrastructure and avoid the failures that could come with the Year 2038 overflow.