The Party That Celebrated a Billion Seconds

On September 9, 2001, a group of programmers gathered in Copenhagen, Denmark, to celebrate a number. At exactly 01:46:40 UTC, the Unix timestamp reached 1,000,000,000: one billion seconds since January 1, 1970. They called it the “Unix Billennium,” and they threw a party for an integer. It was, in its own quiet way, one of the nerdiest and most wonderful moments in computing history.

That number has kept growing ever since. As of 2026, it is well past 1.7 billion, and it will not stop. This is Epoch Time — also called Unix Time — the system that tracks time by counting the total seconds elapsed since January 1, 1970 (UTC). It remains the backbone of global computing, though the industry is now in the final stages of a massive transition to 64-bit systems to address the looming “Year 2038” overflow.

What Is Epoch Time? The Definition That Changed Computing

According to Wikipedia, Unix Time measures how many “non-leap seconds” have passed since 00:00:00 UTC on Thursday, January 1, 1970, a moment known as the Unix Epoch. The choice of that date was mostly a matter of convenience: when Unix was being developed at Bell Labs, engineers needed a clean starting point. The earliest versions counted sixtieths of a second from January 1, 1971, and because a 32-bit counter at that rate runs out within a few years, the epoch was redefined more than once before the counter was switched to whole seconds from January 1, 1970. POSIX.1 later standardized that choice, giving the world a universal standard.

As author Douglas Adams famously joked, “Time is an illusion. Lunchtime, doubly so.” In the digital world, that illusion becomes concrete: a single integer that increments once per second, endlessly. By turning time into a number that just keeps going up, Unix Time removed the need for computers to perform complex calendar math for every basic task.
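To make the “just a number” idea concrete, here is a minimal sketch using only Python's standard `datetime` module. It converts the Billennium timestamp to a calendar date and back, and shows that elapsed time between two instants is plain subtraction. The variable names are illustrative, not from any particular codebase.

```python
from datetime import datetime, timezone

# Timestamp -> calendar date: the Unix Billennium.
billennium = datetime.fromtimestamp(1_000_000_000, tz=timezone.utc)
print(billennium.isoformat())  # 2001-09-09T01:46:40+00:00

# Calendar date -> timestamp. Aware (UTC) datetimes convert without
# consulting the machine's local time zone.
start = int(datetime(2001, 9, 9, 1, 46, 40, tzinfo=timezone.utc).timestamp())
end = int(datetime(2038, 1, 19, 3, 14, 7, tzinfo=timezone.utc).timestamp())

# Elapsed time is plain integer subtraction: no month or leap-year logic.
elapsed = end - start
print(elapsed)  # 1147483647
```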

The Digital Heartbeat: How It Works

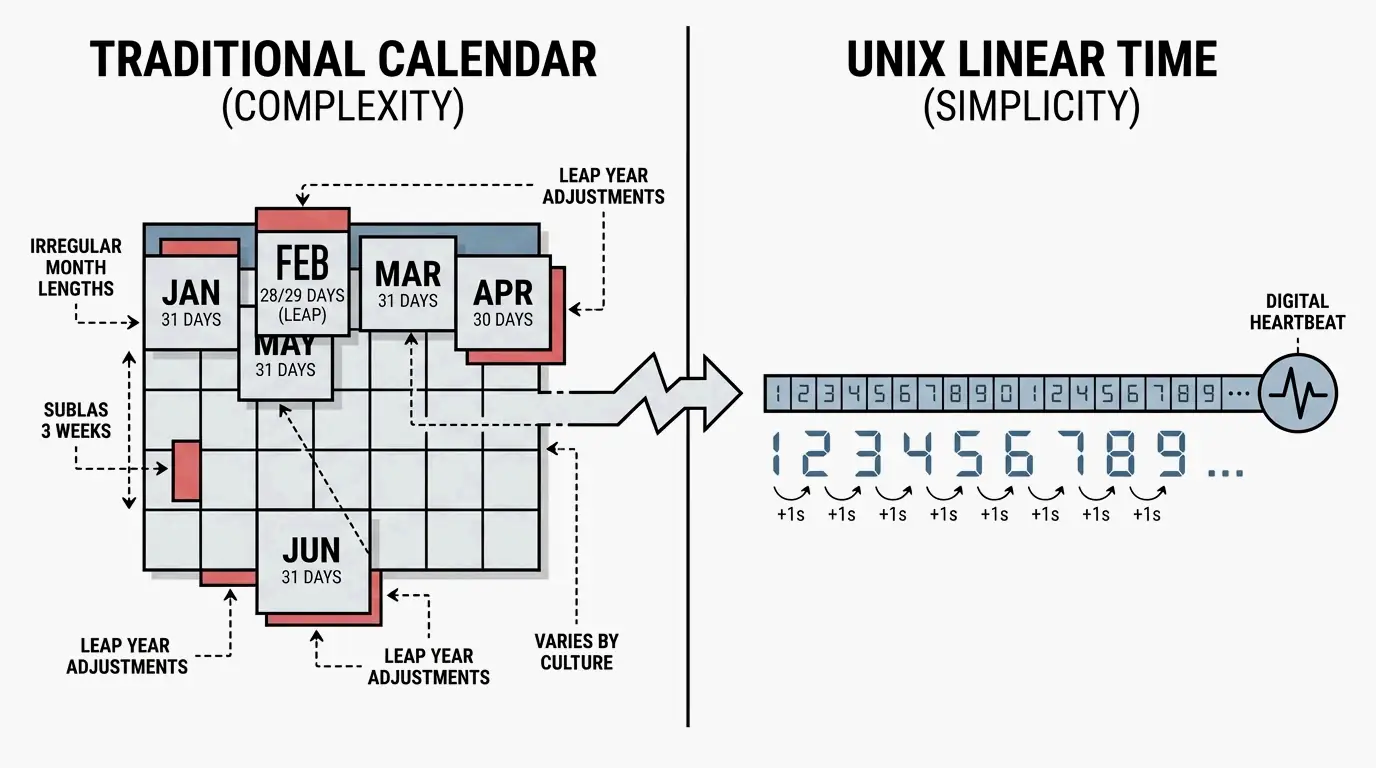

Think of the Unix clock as a “digital heartbeat.” In Unix time, every day is exactly 86,400 seconds. While human calendars wrestle with months of different lengths and leap years, the Unix timestamp simply adds 1 to its total every second.
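Because every Unix day is exactly 86,400 seconds, splitting a timestamp into a day count and a time of day needs only integer division; calendar rules matter only when the day count is turned into a year, month, and day for display. The sketch below is a hypothetical helper (`split_timestamp`) built on a Python port of Howard Hinnant's well-known `civil_from_days` algorithm, and it assumes the proleptic Gregorian calendar.

```python
SECONDS_PER_DAY = 86_400  # fixed length of every Unix day


def civil_from_days(z: int) -> tuple[int, int, int]:
    """Days since 1970-01-01 -> (year, month, day), proleptic Gregorian.

    Port of Howard Hinnant's civil_from_days; works for negative inputs too.
    """
    z += 719_468                        # shift the origin to 0000-03-01
    era = z // 146_097                  # 400-year era (floor division handles negatives)
    doe = z - era * 146_097             # day of era, 0..146096
    yoe = (doe - doe // 1460 + doe // 36_524 - doe // 146_096) // 365  # year of era, 0..399
    y = yoe + era * 400
    doy = doe - (365 * yoe + yoe // 4 - yoe // 100)  # day of year, March-based, 0..365
    mp = (5 * doy + 2) // 153           # month index with March = 0
    d = doy - (153 * mp + 2) // 5 + 1   # day of month, 1..31
    m = mp + 3 if mp < 10 else mp - 9   # convert to January = 1
    return (y + (1 if m <= 2 else 0), m, d)


def split_timestamp(ts: int) -> tuple[int, int, int, int, int, int]:
    """Unix seconds -> (year, month, day, hour, minute, second) in UTC."""
    days, secs = divmod(ts, SECONDS_PER_DAY)  # whole days plus seconds into the day
    hour, rem = divmod(secs, 3600)
    minute, second = divmod(rem, 60)
    return (*civil_from_days(days), hour, minute, second)


print(split_timestamp(1_000_000_000))  # (2001, 9, 9, 1, 46, 40)
```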

This simplicity is why every major programming language supports it. Wikipedia notes that JavaScript’s Date object tracks time in milliseconds since the epoch, and modern file systems such as APFS and ext4 record timestamps with nanosecond resolution. The concept remains the same: a linear count that ignores the messy human calendar.

| Time Standard | Epoch Start | Counting Unit |
|---|---|---|
| Unix Time | January 1, 1970 | Seconds |
| JavaScript Date | January 1, 1970 | Milliseconds |
| Windows FILETIME | January 1, 1601 | 100-nanosecond intervals |
| GPS Time | January 6, 1980 | Seconds (continuous, no leap seconds) |
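Converting between the formats in the table comes down to fixed epoch offsets and unit scaling. The sketch below assumes integer inputs and hard-codes the GPS-to-UTC difference at 18 seconds, its value since January 1, 2017. That constant is only correct for instants after the most recent leap second; a general converter would need a full leap-second table. All function names are illustrative.

```python
# Fixed offsets between epochs, in seconds.
FILETIME_EPOCH_OFFSET = 11_644_473_600  # 1601-01-01 -> 1970-01-01 (134,774 days)
GPS_EPOCH_UNIX = 315_964_800            # 1980-01-06T00:00:00Z as a Unix timestamp
GPS_UTC_LEAP_OFFSET = 18                # GPS minus UTC since 2017-01-01 (assumed current)

TICKS_PER_SECOND = 10_000_000           # FILETIME counts 100-ns intervals


def unix_to_js_ms(unix_s: int) -> int:
    """Unix seconds -> JavaScript Date milliseconds."""
    return unix_s * 1000


def unix_to_filetime(unix_s: int) -> int:
    """Unix seconds -> Windows FILETIME ticks."""
    return (unix_s + FILETIME_EPOCH_OFFSET) * TICKS_PER_SECOND


def filetime_to_unix(filetime: int) -> int:
    """Windows FILETIME ticks -> whole Unix seconds (sub-second part dropped)."""
    return filetime // TICKS_PER_SECOND - FILETIME_EPOCH_OFFSET


def gps_to_unix(gps_s: int) -> int:
    """GPS seconds -> Unix seconds; valid only for instants after 2017-01-01."""
    return gps_s + GPS_EPOCH_UNIX - GPS_UTC_LEAP_OFFSET


print(unix_to_filetime(0))          # 116444736000000000
print(gps_to_unix(1_167_264_018))   # 1483228800 (2017-01-01T00:00:00Z)
```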

The 2026 Status: Solving the Year 2038 Problem

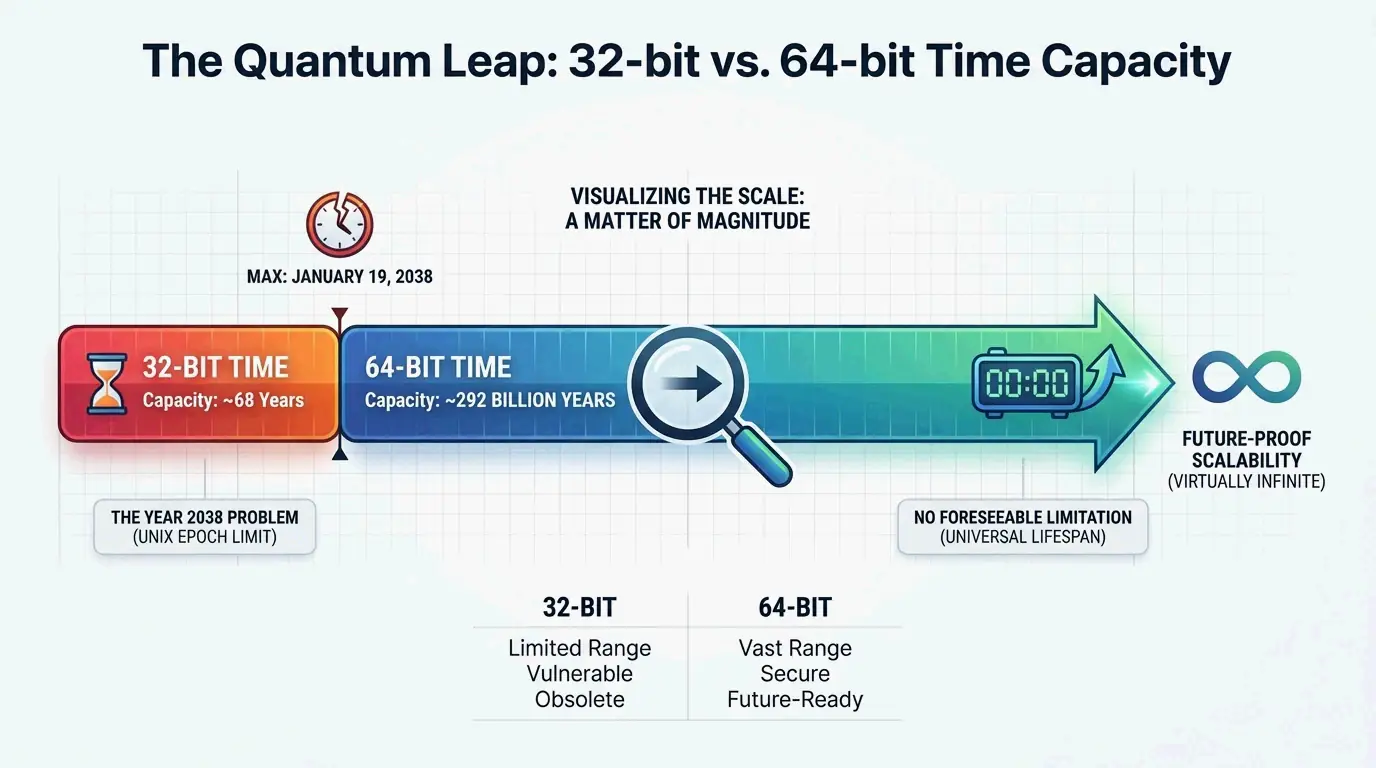

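By 2026, the tech world is entering the home stretch of a massive infrastructure upgrade. The Year 2038 problem exists because systems that store Unix time in a 32-bit signed integer can only count so high: the maximum value is 2,147,483,647. According to Wikipedia, that value corresponds to 03:14:07 UTC on January 19, 2038. One second later the counter overflows and, on typical two’s-complement hardware, wraps around to -2,147,483,648, which decodes as 20:45:52 UTC on December 13, 1901. Unpatched software could then misbehave in ways ranging from wrong dates to outright crashes, in systems as varied as bank servers and power-grid controllers.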
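Python integers never overflow, so the sketch below simulates a 32-bit signed counter by keeping only the low 32 bits and reinterpreting them as two’s complement, which is how most 32-bit hardware behaves (in C, signed overflow is formally undefined behavior). It is an illustration of the wraparound, not a model of any particular system; the helper names are hypothetical.

```python
import struct
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def as_int32(value: int) -> int:
    """Keep the low 32 bits of value and read them as a signed (two's-complement) int."""
    return struct.unpack("<i", struct.pack("<I", value & 0xFFFF_FFFF))[0]


def to_utc(ts: int) -> datetime:
    """Unix seconds -> aware UTC datetime; timedelta arithmetic also handles negatives."""
    return EPOCH + timedelta(seconds=ts)


last_ok = 2**31 - 1               # largest value a signed 32-bit counter can hold
wrapped = as_int32(last_ok + 1)   # the "next second" after overflow

print(to_utc(last_ok))  # 2038-01-19 03:14:07+00:00
print(wrapped)          # -2147483648
print(to_utc(wrapped))  # 1901-12-13 20:45:52+00:00
```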

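In 2026, the fix is largely in place. The Linux kernel and Windows system APIs have moved to 64-bit integers for the time_t data type. This matters even before 2038: a 32-bit field simply cannot hold a date beyond the limit, so any database or file format that still stores timestamps in 32 bits will fail as soon as it needs to record, say, a 2040 expiry date.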
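Auditing for the problem often starts with a simple range check: can a given timestamp fit in a signed 32-bit field at all? A minimal sketch, using a hypothetical list of expiry timestamps that a legacy system might need to store:

```python
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def fits_in_int32(ts: int) -> bool:
    """True if ts can be stored in a signed 32-bit field without overflowing."""
    return INT32_MIN <= ts <= INT32_MAX


# Hypothetical sample data, not from any real system.
expiries = [
    1_767_225_600,  # 2026-01-01T00:00:00Z
    2_147_483_647,  # 2038-01-19T03:14:07Z, the last representable second
    2_208_988_800,  # 2040-01-01T00:00:00Z
]
overflowing = [ts for ts in expiries if not fits_in_int32(ts)]
print(overflowing)  # [2208988800]
```

In a real audit the same check would be applied to the values flowing into each 32-bit column, struct field, or file-format slot.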

Why 64-Bit Is the Ultimate Fix

| Attribute | 32-bit | 64-bit |
|---|---|---|
| Maximum value | ~2.1 billion | ~9.2 quintillion |
| Date range | ~68 years either side of 1970 (1901 to 2038) | ~292 billion years either side of 1970 |
| Overflow date | January 19, 2038 | Far beyond the solar system’s lifetime |

A 64-bit signed integer expands the trackable range to approximately 292 billion years in either direction from 1970, about twenty times the current age of the universe (roughly 13.8 billion years). Developers have essentially “future-proofed” the digital clock: where 32-bit systems were limited to about 68 years either side of 1970, 64-bit systems will not overflow for as long as human civilization persists.
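The range figures follow from simple arithmetic: divide the largest signed value by the number of seconds in a year. This sketch assumes a mean Gregorian year of 365.2425 days, so the results are approximations.

```python
SECONDS_PER_YEAR = 365.2425 * 86_400  # mean Gregorian year, in seconds


def max_years(bits: int) -> float:
    """Years after 1970 before a signed counter of the given width overflows."""
    max_seconds = 2 ** (bits - 1) - 1  # largest positive signed value
    return max_seconds / SECONDS_PER_YEAR


years32 = max_years(32)  # roughly 68 years: 1970 + 68 lands in January 2038
years64 = max_years(64)  # roughly 292 billion years
print(f"32-bit: {years32:.1f} years, 64-bit: {years64 / 1e9:.1f} billion years")
# 32-bit: 68.1 years, 64-bit: 292.3 billion years
```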

Leap Seconds: The Hidden Complexity

Even though Unix time is elegant, it has a quirk: it does not account for leap seconds. The POSIX standard mandates that a Unix day must always be 86,400 seconds. But Earth’s rotation is not perfectly consistent, so UTC occasionally adds a leap second to stay aligned with the planet.

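When a leap second occurs, Unix time hits a discontinuity. Every leap second so far has been an inserted one (23:59:60 UTC), and because Unix time has no value for that extra second, the same timestamp ends up covering two consecutive UTC seconds: the clock effectively steps back by one. A removed leap second, which has never happened, would instead make Unix time skip a value. This makes Unix time different from International Atomic Time (TAI), which is a pure, uninterrupted count of seconds. Most modern networks synchronize clocks with the Network Time Protocol (NTP), which announces upcoming leap seconds; some large operators, such as Google, go further and “smear” the extra second across many hours so that clocks never visibly step.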

| Time Standard | Leap Second Handling | Behavior |
|---|---|---|
| Unix Time (POSIX) | Not counted | Repeats a timestamp for an inserted leap second (would skip one for a removed leap second) |
| UTC | Observes | Inserts leap seconds as needed (23:59:60) |
| TAI (Atomic Time) | None (has no leap seconds) | Pure continuous count |
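POSIX defines Unix time with an explicit formula over the broken-down UTC fields (years since 1900, zero-based day of the year, hour, minute, second). Transcribing that formula, as the hypothetical `posix_seconds` function below does, shows why a leap second has no timestamp of its own: 23:59:60 on December 31, 2016 maps to the same value as the following midnight. POSIX leaves the result undefined for years before 1970, so the sketch assumes 1970 or later.

```python
def posix_seconds(year: int, yday: int, hour: int, minute: int, second: int) -> int:
    """POSIX "Seconds Since the Epoch" formula; assumes year >= 1970.

    yday is the zero-based day of the year (tm_yday). Leap seconds are never
    counted, so second == 60 lands on the same value as the next minute's :00.
    """
    y = year - 1900  # tm_year
    return (second + minute * 60 + hour * 3600 + yday * 86400
            + (y - 70) * 31536000 + ((y - 69) // 4) * 86400
            - ((y - 1) // 100) * 86400 + ((y + 299) // 400) * 86400)


before = posix_seconds(2016, 365, 23, 59, 59)  # 2016-12-31T23:59:59Z
leap = posix_seconds(2016, 365, 23, 59, 60)    # the inserted leap second
after = posix_seconds(2017, 0, 0, 0, 0)        # 2017-01-01T00:00:00Z
print(before, leap, after)  # 1483228799 1483228800 1483228800
```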

From Mechanical Gears to Digital Epochs: A Clockwork History

The digital epoch is the latest chapter in a long history of timekeeping. The Antikythera mechanism, an ancient Greek device from the first century BCE, is the earliest known “clockwork” computer, used to track astronomical positions. Comparable gearing ingenuity resurfaced centuries later in the mechanical clocks of medieval Europe and the pendulum clocks of the 1600s.

Today, this fascination with timekeeping shows up in unexpected places. The action RPG Clockwork Revolution, developed by inXile Entertainment, is set in a steampunk city called Avalon where time travel is the central mechanic. Players use a device called the Chronometer to rewrite history. Producer Brian Fargo noted that as of August 2025, the team had written 750,000 words of dialogue, a reminder that our obsession with “revolving” time bridges cold engineering and human imagination.

FAQ

What is the difference between Unix Time and GPS or Windows FILETIME?

Unix time counts seconds from January 1, 1970, and intentionally ignores leap seconds to keep every day at 86,400 seconds. GPS time is a continuous count of seconds starting from January 6, 1980, that never inserts leap seconds at all; as a result it has drifted ahead of UTC, by 18 seconds since 2017. Windows FILETIME counts 100-nanosecond intervals from January 1, 1601, offering much finer granularity.

Why was January 1, 1970, chosen as the Unix Epoch?

The date was essentially a convenient round number picked by the early Unix developers at Bell Labs, led by Ken Thompson and Dennis Ritchie. The first edition of Unix counted sixtieths of a second from January 1, 1971; because that counter overflowed quickly, the epoch was redefined more than once before Unix settled on whole seconds from January 1, 1970, which POSIX later adopted as the official standard.

How does a 64-bit Unix timestamp prevent the Year 2038 problem?

The Year 2038 problem occurs because a 32-bit signed integer caps at 2,147,483,647 seconds (about 2.1 billion), a value reached in January 2038. A 64-bit signed integer raises that ceiling to over 9.2 quintillion, roughly four billion times the 32-bit limit, which is enough for about 292 billion years in either direction from 1970. In practice, the clock will never overflow within the lifespan of our solar system.

Conclusion

Epoch Time is more than a string of numbers — it is the universal language of the digital age. From its origin in 1970 to the ongoing 64-bit migration of 2026, Unix time has been a remarkably steady foundation for global computing. Developers should audit older systems for lingering 32-bit variables to ensure readiness for 2038. Meanwhile, the “clockwork” themes we see in culture — from the Antikythera mechanism to modern RPGs — remind us that timekeeping has always been a blend of cold engineering and human imagination.