Quick Summary

Epoch time, or Unix time, is a system that tracks time by counting the total number of seconds that have passed since January 1, 1970, at 00:00:00 UTC. As of May 2026, it remains the standard way to sync data across global databases, APIs, and modern coding environments.

Understanding the Core: What is Epoch Time and How Does It Work?

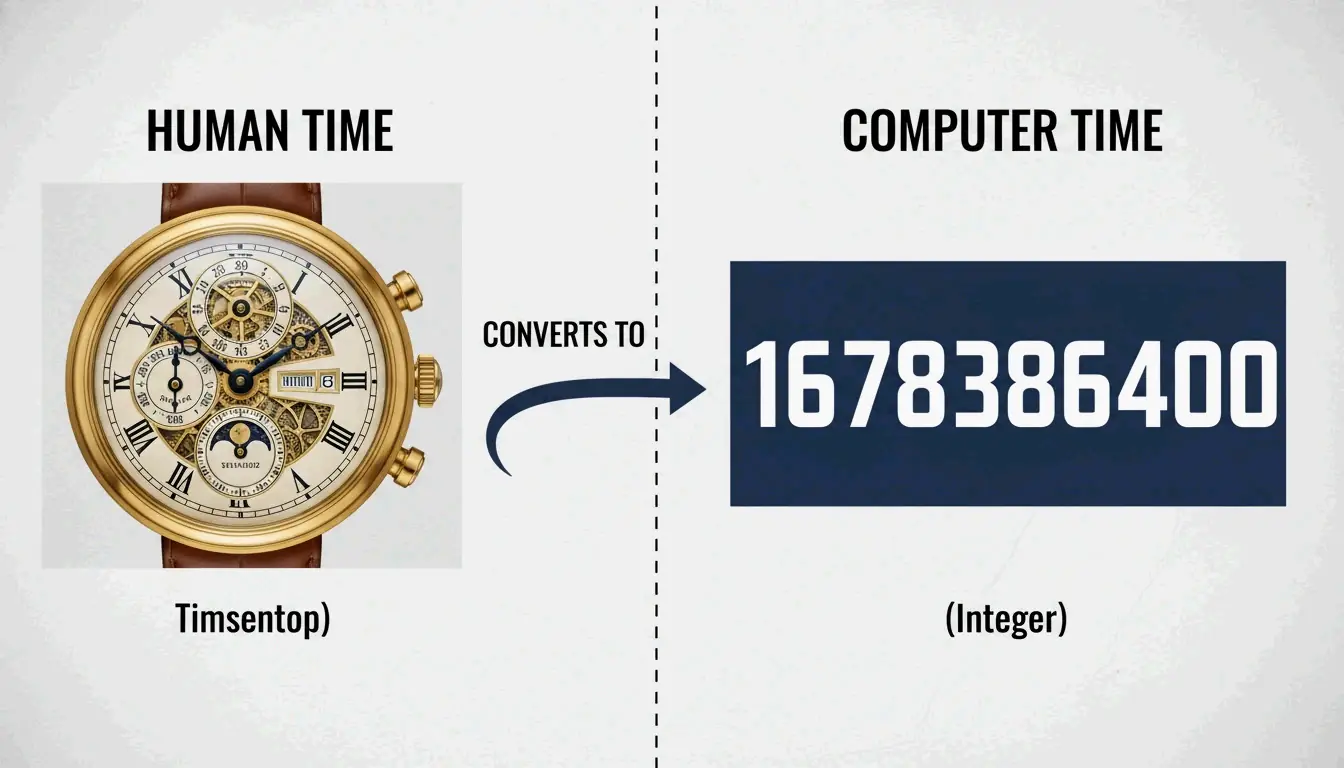

Think of Epoch time as a simple, linear counter. Computer systems use it to represent any specific moment in history as a single, large integer. While humans prefer dates with months, leap years, and time zones, computers find integers much easier to sort, compare, and store efficiently.

The foundation of this system is the Unix epoch. According to the POSIX.1 standard, this “starting line” is set at 00:00:00 Coordinated Universal Time (UTC) on January 1, 1970. Every second that ticks by adds one to the counter. For example, on May 6, 2026, the Unix timestamp is roughly 1,778,030,894, as tracked by TimeCal.net.
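The "linear counter" idea can be sketched in a few lines of Python: subtract the epoch from a time-zone-aware UTC datetime and count whole seconds. The specific moment, 2026-05-06 01:28:14 UTC, is an assumption chosen because it reproduces the approximate figure quoted above. It is not taken from TimeCal.net.

```python
from datetime import datetime, timedelta, timezone

# The Unix epoch: 1970-01-01 00:00:00 UTC.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def seconds_since_epoch(moment: datetime) -> int:
    """Count whole seconds between the epoch and an aware datetime."""
    # timedelta // timedelta gives an int, i.e. the number of whole seconds.
    return (moment - EPOCH) // timedelta(seconds=1)

# Illustrative moment (assumed), chosen to match the figure in the text.
moment = datetime(2026, 5, 6, 1, 28, 14, tzinfo=timezone.utc)
ts = seconds_since_epoch(moment)
print(ts)                       # 1778030894

# The standard library's own conversion agrees.
print(int(moment.timestamp()))  # 1778030894
```

Because the result is a plain integer, comparing two moments is just comparing two numbers.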

Since it relies on UTC (Coordinated Universal Time), Epoch time doesn’t care about time zones. A single timestamp means the exact same moment in New York, Tokyo, or London. This universal nature is why it acts as the hidden backbone for network protocols, file systems like ext4, and cloud-based databases.
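The sketch below tests that claim in Python. It writes the same instant as local wall-clock time in three cities and confirms they all produce one timestamp. The fixed offsets (UTC-4 for New York, UTC+1 for London, UTC+9 for Tokyo) are assumptions that match daylight-saving rules in May. Real code would normally look zones up in a time zone database, such as the standard zoneinfo module, rather than hard-coding offsets.

```python
from datetime import datetime, timedelta, timezone

# Assumed fixed offsets for May 2026 (EDT, BST, JST); illustrative only.
NEW_YORK = timezone(timedelta(hours=-4))
LONDON = timezone(timedelta(hours=1))
TOKYO = timezone(timedelta(hours=9))

# One instant, expressed as local wall-clock time in each city.
readings = {
    "New York": datetime(2026, 5, 5, 21, 28, 14, tzinfo=NEW_YORK),
    "London": datetime(2026, 5, 6, 2, 28, 14, tzinfo=LONDON),
    "Tokyo": datetime(2026, 5, 6, 10, 28, 14, tzinfo=TOKYO),
}

for city, local in readings.items():
    # timestamp() normalizes to UTC, so the local offset drops out.
    print(f"{city:9} {local.isoformat()} -> {int(local.timestamp())}")

# The reverse direction: one timestamp rendered in each zone.
for tz in (NEW_YORK, LONDON, TOKYO):
    print(datetime.fromtimestamp(1778030894, tz=tz).isoformat())
```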

The Mechanics: How Unix Time Ignores Leap Seconds

There is one technical quirk to keep in mind: how Unix time handles leap seconds. As noted on Wikipedia, Unix time isn’t a perfect 1:1 map of “atomic time” because it essentially ignores these extra seconds.

The POSIX.1 standard assumes every day has exactly 86,400 seconds. When UTC inserts a positive leap second to stay in step with the Earth’s rotation, Unix time has no value for that extra second, so one timestamp is effectively repeated. A negative leap second would instead make Unix time skip a value. This works fine for calendar apps and most business software. It is not precise enough, though, for scientific or timing work that needs an unambiguous, strictly increasing timeline. That kind of work requires a separate leap second table.
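To make the 86,400-second assumption concrete, here is a Python transcription of the "Seconds Since the Epoch" expression from the POSIX.1 base definitions. It uses integer division throughout, with tm_year counted from 1900 and tm_yday from 0. It is a sketch for illustration only; real code should rely on platform routines such as calendar.timegm. The formula has no slot for a 61st second, so feeding it 23:59:60 produces the same value as the following midnight.

```python
from datetime import date

def posix_seconds(year, month, day, hour=0, minute=0, second=0):
    """Seconds since the epoch per the POSIX.1 'Seconds Since the Epoch' expression.

    Every day is counted as exactly 86,400 seconds. POSIX leaves years before
    1970 unspecified, so this sketch is only meaningful from 1970 onward.
    """
    tm_year = year - 1900  # struct tm convention: years since 1900
    # 0-based day of the year, like struct tm's tm_yday.
    tm_yday = date(year, month, day).toordinal() - date(year, 1, 1).toordinal()
    return (second + minute * 60 + hour * 3600 + tm_yday * 86400
            + (tm_year - 70) * 31536000
            + ((tm_year - 69) // 4) * 86400      # a leap day every 4 years...
            - ((tm_year - 1) // 100) * 86400     # ...except century years...
            + ((tm_year + 299) // 400) * 86400)  # ...unless divisible by 400

before = posix_seconds(1998, 12, 31, 23, 59, 59)
leap = posix_seconds(1998, 12, 31, 23, 59, 60)  # the leap second 23:59:60
after = posix_seconds(1999, 1, 1, 0, 0, 0)
print(before, leap, after)  # 915148799 915148800 915148800
```

The last line shows the collision directly: the leap second and the following midnight share one timestamp.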

The Developer’s Cheat Sheet: Converting Epoch Time in Modern Languages

The most common hurdle for developers is turning these long numbers into something a human can read. A frequent source of bugs is a simple mix-up: 10-digit versus 13-digit timestamps.

As explained by UnixEpoch.net, a 10-digit timestamp counts seconds (standard Unix time), while a 13-digit one counts milliseconds. If you treat a present-day millisecond timestamp as seconds, your code will land on a date more than 50,000 years in the future.
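Here is a quick Python check of that failure mode. The millisecond value is an assumed example: a 2026 timestamp multiplied by 1,000. The year estimate divides by the average Gregorian year (365.2425 days), so it is approximate. For this particular value it lands near the year 58,000. Python's datetime only supports years up to 9999, so in Python the mistake usually surfaces as an exception rather than as a silently wrong date.

```python
from datetime import datetime, timezone

AVG_YEAR_SECONDS = 365.2425 * 86400  # average Gregorian year, for a rough estimate

ms_timestamp = 1_778_030_894_000     # assumed example: a 13-digit millisecond value

# Wrong: reading milliseconds as seconds pushes the date tens of millennia ahead.
approx_year = 1970 + int(ms_timestamp / AVG_YEAR_SECONDS)
print(approx_year)  # 58313

try:
    datetime.fromtimestamp(ms_timestamp, tz=timezone.utc)
except (ValueError, OverflowError, OSError) as exc:
    # datetime cannot represent years past 9999, so the conversion is rejected.
    print("rejected:", exc)

# Right: divide by 1,000 first (integer division drops the milliseconds).
fixed = datetime.fromtimestamp(ms_timestamp // 1000, tz=timezone.utc)
print(fixed.isoformat())  # 2026-05-06T01:28:14+00:00
```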

Python & JavaScript Implementation

Languages differ here. In JavaScript, Date.now() returns a 13-digit millisecond timestamp, so you need to divide it by 1,000 and round down to get standard Unix seconds.

In Python, the datetime module is your best bet. According to PyTutorial, datetime.datetime.now().timestamp() returns the time as a float, where the digits after the decimal point hold the fraction of a second (at microsecond resolution).
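Here is a short sketch of the standard-library options. Both time.time() and datetime.now(timezone.utc).timestamp() return float seconds. time.time_ns() (Python 3.7+) returns an integer count of nanoseconds, which avoids float rounding. Which one to use is a design choice, not a rule.

```python
import time
from datetime import datetime, timezone

now_float = time.time()                          # float seconds since the epoch
now_dt = datetime.now(timezone.utc).timestamp()  # also float seconds
now_ns = time.time_ns()                          # int nanoseconds (Python 3.7+)

seconds = int(now_float)         # standard 10-digit Unix seconds
millis = now_ns // 1_000_000     # 13-digit milliseconds, like JavaScript's Date.now()
fraction = now_dt - int(now_dt)  # sub-second part, microsecond resolution

print(seconds, millis, round(fraction, 6))
```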

Quick Conversion Guide (current Unix time in seconds):

- JavaScript: Math.floor(Date.now() / 1000)
- Python: import time; int(time.time())
- Go: time.Now().Unix()
- SQL (MySQL): SELECT UNIX_TIMESTAMP()
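The list above only reads the current clock. The conversion itself turns a stored timestamp into a readable date and back again. A minimal Python sketch of that round trip might look like this. The helper names to_iso and from_iso are hypothetical, and both helpers assume UTC rather than the machine's local zone.

```python
from datetime import datetime, timezone

def to_iso(ts: int) -> str:
    """Hypothetical helper: Unix seconds -> ISO 8601 string in UTC."""
    return datetime.fromtimestamp(ts, tz=timezone.utc).isoformat()

def from_iso(text: str) -> int:
    """Hypothetical helper: ISO 8601 string with an explicit offset -> Unix seconds."""
    parsed = datetime.fromisoformat(text)
    if parsed.tzinfo is None:
        # timestamp() would read a naive value as local time, so refuse it.
        raise ValueError("timestamp string needs an explicit UTC offset")
    return int(parsed.timestamp())

print(to_iso(1778030894))                     # 2026-05-06T01:28:14+00:00
print(from_iso("2026-05-06T01:28:14+00:00"))  # 1778030894
```

Rejecting naive strings is deliberate. It stops the machine's local time zone from silently leaking into what should be a zone-free number.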

Handling Milliseconds vs. Seconds

When you’re debugging an API response, check the digit length first. TimeCal.net points out that backend languages like PHP and Go usually stick to 10-digit seconds, while frontend tools and Java often use 13-digit milliseconds for extra precision. The most reliable way to keep different systems talking to each other correctly is to agree on one unit at every system boundary. That unit is typically whole seconds, as stored in time_t, the classic C integer type for time.
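One way to act on the digit-length check is a small normalizing function at the API boundary. The sketch below is a heuristic, not a standard. The thresholds assume present-day dates, since seconds values have 10 digits from September 2001 until the year 2286. The function name to_unix_seconds is hypothetical.

```python
def to_unix_seconds(value: int) -> int:
    """Hypothetical helper: normalize a seconds or milliseconds timestamp to seconds.

    Heuristic based on digit count; only sensible for present-day dates.
    """
    digits = len(str(abs(value)))
    if digits <= 10:
        return value          # already standard Unix seconds
    if digits == 13:
        return value // 1000  # milliseconds (JavaScript, Java)
    # 11-12 or 14+ digits: ambiguous for present-day dates, so fail loudly.
    raise ValueError(f"unrecognized timestamp magnitude: {value}")

print(to_unix_seconds(1778030894))     # 1778030894
print(to_unix_seconds(1778030894123))  # 1778030894
```

Failing loudly on unexpected lengths is a design choice. It surfaces a bad upstream value instead of guessing.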

Will Time Run Out? The Year 2038 Problem (Y2K38) Explained

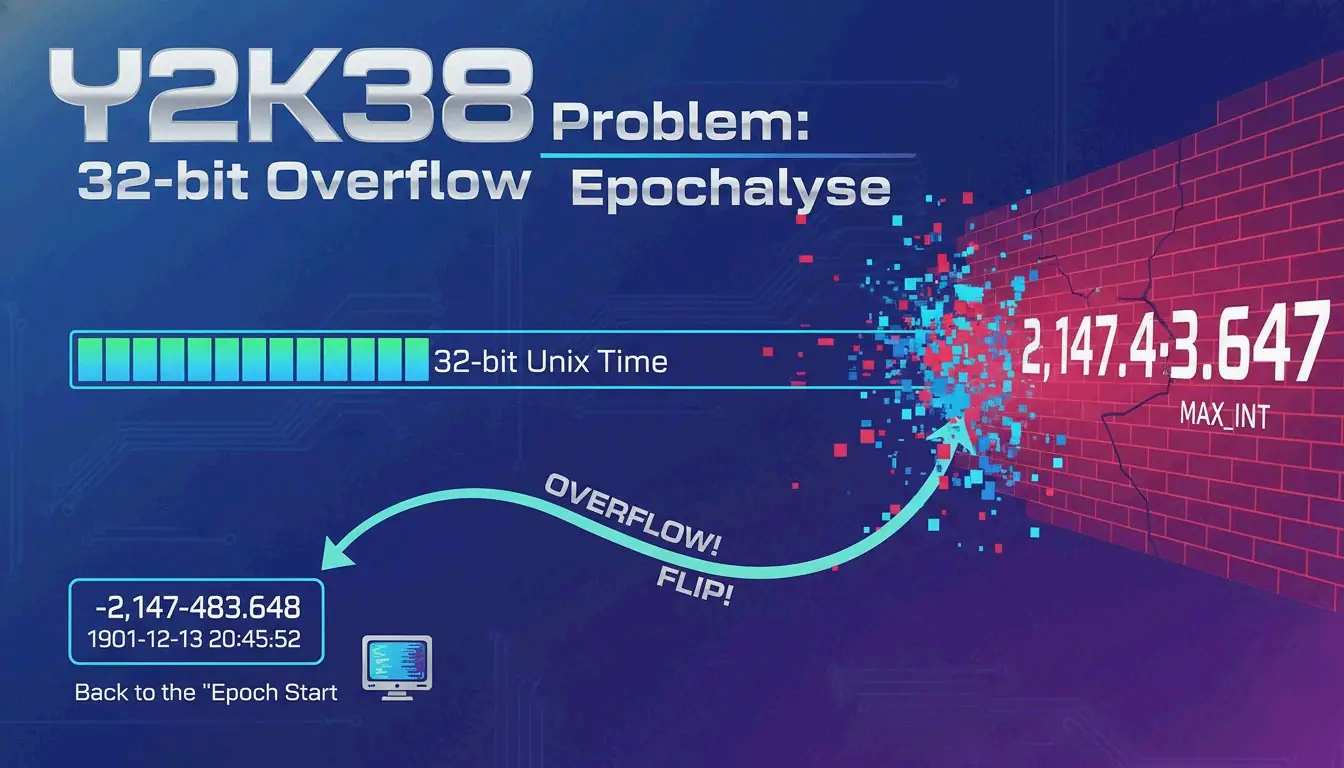

The Year 2038 problem, sometimes called the Y2K38 superbug or the Epochalypse, is a looming deadline for older computers. The trouble comes down to how these systems store the time_t value: they use a signed 32-bit integer.

A signed 32-bit integer can only go up to 2,147,483,647. Wikipedia notes that we will hit this limit on January 19, 2038, at 03:14:07 UTC. One second later, the counter will “overflow” and flip to a negative number, making the computer think the date is actually December 13, 1901.
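Python integers never overflow, so the sketch below emulates a signed 32-bit time_t by wrapping values into the range -2^31 to 2^31 - 1. It converts through timedelta arithmetic rather than datetime.fromtimestamp, because some platforms reject negative timestamps there. The helper names are illustrative.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # 2,147,483,647

def wrap_int32(value: int) -> int:
    """Emulate two's-complement wraparound of a signed 32-bit integer."""
    return (value + 2**31) % 2**32 - 2**31

def to_utc(ts: int) -> datetime:
    """Convert Unix seconds to an aware UTC datetime (works for negative values)."""
    return EPOCH + timedelta(seconds=ts)

overflowed = wrap_int32(INT32_MAX + 1)  # one second past the limit

print(to_utc(INT32_MAX))   # 2038-01-19 03:14:07+00:00
print(overflowed)          # -2147483648
print(to_utc(overflowed))  # 1901-12-13 20:45:52+00:00
```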

Historical Context: The AOLserver 2006 Crash

This isn’t just a theory. According to Wikipedia, we saw a preview of this in May 2006 with AOLserver. The software had a “billion-second” timeout setting for database requests. When that billion seconds was added to the current 2006 date, the total went past the 2038 limit, causing the system to crash.
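The arithmetic behind that crash is easy to check. The one-billion-second timeout comes from the account above. The cutoff date, by contrast, is derived here from that timeout rather than quoted from a source, and the helper deadline_fits is hypothetical. From the cutoff onward, "now plus one billion seconds" no longer fits in a signed 32-bit time_t.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1
TIMEOUT = 1_000_000_000  # the "billion-second" timeout described above

# Latest start time whose deadline (now + TIMEOUT) still fits in 32 bits.
cutoff = INT32_MAX - TIMEOUT
print(EPOCH + timedelta(seconds=cutoff))  # 2006-05-13 01:27:27+00:00

def deadline_fits(now: int, timeout: int = TIMEOUT) -> bool:
    """True if now + timeout is representable as a signed 32-bit time_t."""
    return now + timeout <= INT32_MAX

print(deadline_fits(1_146_441_600))  # 2006-05-01: True
print(deadline_fits(1_149_120_000))  # 2006-06-01: False
```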

The 64-Bit Solution

Most modern systems have already moved to 64-bit integers to avoid this. A 64-bit Unix timestamp expands the range to roughly 292 billion years in either direction. As The Guardian puts it, that is more than 20 times the age of the universe—essentially a permanent fix for human timekeeping.
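Here is a back-of-the-envelope check of those figures. It divides the largest signed 64-bit value by an average Gregorian year. The 13.8-billion-year age of the universe is an assumed, commonly cited estimate, not a figure from The Guardian.

```python
INT64_MAX = 2**63 - 1
SECONDS_PER_YEAR = 365.2425 * 86400  # average Gregorian year (approximation)
AGE_OF_UNIVERSE_YEARS = 13.8e9       # commonly cited estimate (assumption)

span_years = INT64_MAX / SECONDS_PER_YEAR
print(f"{span_years:.3e} years each way")  # 2.923e+11 years each way
print(f"{span_years / AGE_OF_UNIVERSE_YEARS:.1f}x the age of the universe")
```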

Leap Seconds and Unix Time: Why Your Clock Might Repeat Itself

While it rarely affects daily life, the way Unix time handles leap seconds can be tricky for high-precision tech. Because POSIX.1 insists every day is exactly 86,400 seconds, there is no official way to represent a “61st second” in a minute.

Visualizing Leap Second Repetition

When a positive leap second occurs, UTC counts 23:59:60 before rolling over to midnight. A standard Unix clock has no value for that extra second, so it typically gives 23:59:60 the same timestamp as 00:00:00 of the next day, and that timestamp occurs twice.

| TAI Time | UTC Time | Unix Timestamp |
|---|---|---|
| 1999-01-01T00:00:31.00 | 1998-12-31T23:59:60.00 | 915148800.00 |
| 1999-01-01T00:00:32.00 | 1999-01-01T00:00:00.00 | 915148800.00 |

As the table from Wikipedia shows, the timestamp 915148800 becomes ambiguous because it refers to two different moments in reality. This “double-counting” can cause glitches in high-frequency trading or scientific logging where the exact order of events is everything.

Conclusion

Epoch time is the invisible engine of digital timekeeping. By reducing any moment to a single integer, it gives everything from Linux servers to web browsers a shared, time-zone-independent reference for staying in sync. Its main weakness is inherited: systems that still store time_t as a signed 32-bit integer will overflow in January 2038. For developers, now is the time to audit old code and make sure the switch to 64-bit integers is complete.

FAQ

What is the difference between 10-digit and 13-digit timestamps?

A 10-digit timestamp counts the seconds since the epoch and is the standard for most databases and backend languages. A 13-digit timestamp counts milliseconds. It is the default in JavaScript (Date.now()) and Java (System.currentTimeMillis()), where the extra precision is useful. To convert milliseconds to seconds, divide by 1,000 and drop the remainder.

Can Epoch time represent dates before January 1, 1970?

Yes. Dates before the epoch are shown as negative numbers. For instance, Wikipedia notes that -31,536,000 represents January 1, 1969—exactly one year before the epoch started. Modern 64-bit systems handle these negative values easily.
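Here is a quick Python check of that example. 1969 is not a leap year, so one year before the epoch is 365 * 86,400 = 31,536,000 seconds. The sketch uses timedelta arithmetic instead of datetime.fromtimestamp, because some platforms reject negative timestamps in that function.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

ts = -31_536_000  # 365 days * 86,400 seconds before the epoch
print(EPOCH + timedelta(seconds=ts))  # 1969-01-01 00:00:00+00:00

# And back again: a pre-1970 date gives a negative count.
back = (datetime(1969, 1, 1, tzinfo=timezone.utc) - EPOCH) // timedelta(seconds=1)
print(back)  # -31536000
```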

Is ‘The Epoch Times’ newspaper related to Unix epoch time?

No, they aren’t related. The Epoch Times is an international media company and newspaper. Unix Epoch time is a technical standard used in computing. They happen to share a name but serve completely different worlds.